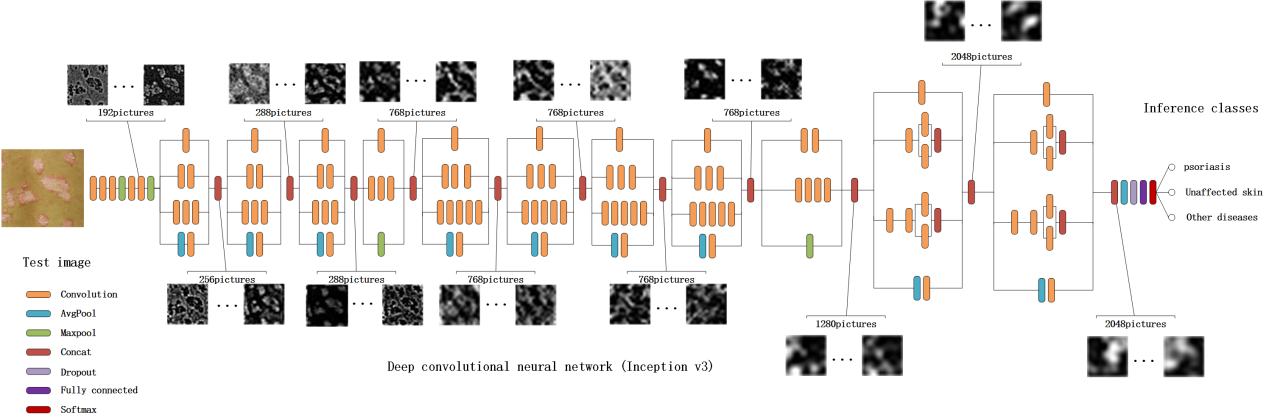

Inception-V3 does not use Keras’ Sequential Model due to branch merging (for the inception module), hence we cannot simply use model.pop() to truncate the top layer. The process is mostly similar to that of VGG16, with one subtle difference. The code for fine-tuning Inception-V3 can be found in inception_v3.py. Schematic Diagram of the 27-layer Inception-V1 Model (Idea similar to that of V3): The model is characterized by the usage of the Inception Module, which is a concatenation of features maps generated by kernels of varying dimensions. Inception-V3 achieved the second place in the 2015 ImageNet competition with a 5.6% top 5 error rate on the validation set. predict ( X_valid, batch_size = batch_size, verbose = 1 ) score = log_loss ( Y_valid, predictions_valid )įine-tune Inception-V3. After it is done, we use the model the make prediction on the validation set and return the score for the cross entropy loss: predictions_valid = model. The fine-tuning process will take a while, depending on your hardware. fit ( train_data, test_data, batch_size = batch_size, nb_epoch = nb_epoch, shuffle = True, verbose = 1, validation_data = ( X_valid, Y_valid ), ) Next, we load our dataset, split it into training and testing sets, and start fine-tuning the model: X_train, X_valid, Y_train, Y_valid = load_data () model. For colored image with resolution 224x224, img_rows = img_cols = 224, channel = 3.

Where img_rows, img_cols, and channel define the dimension of the input. compile ( optimizer = sgd, loss = 'categorical_crossentropy', metrics = ) model = vgg_std16_model ( img_rows, img_cols, channel, num_class ) sgd = SGD ( lr = 1e-3, decay = 1e-6, momentum = 0.9, nesterov = True ) model. Notice that we use an initial learning rate of 0.001, which is smaller than the learning rate for training scratch model (usually 0.01). We then fine-tune the model by minimizing the cross entropy loss function using stochastic gradient descent (sgd) algorithm. This can be done by the following lines: for layer in model. Say we want to freeze the weights for the first 10 layers. Sometimes, we want to freeze the weight for the first few layers so that they remain intact throughout the fine-tuning process. Where the num_class variable in the last line represents the number of class labels for our classification task. add ( Dense ( num_class, activation = 'softmax' )) load_weights ( 'cache/vgg16_weights.h5' )įor fine-tuning purpose, we truncate the original softmax layer and replace it with our own by the following snippet: model. After defining the fully connected layer, we load the ImageNet pre-trained weight to the model by the following line: model. The first part of the vgg_std16_model function is the model schema for VGG16. The script for fine-tuning VGG16 can be found in vgg16.py. The model achieves a 7.5% top 5 error rate on the validation set, which is a result that earned them a second place finish in the competition. VGG16 is a 16-layer Covnet used by the Visual Geometry Group (VGG) at Oxford University in the 2014 ILSVRC (ImageNet) competition.

With that, you can customize the scripts for your own fine-tuning task.īelow is a detailed walkthrough of how to fine-tune VGG16 and Inception-V3 models using the scripts.įine-tune VGG16. Implementations of VGG16, VGG19, GoogLeNet, Inception-V3, and ResNet50 are included. The scripts are hosted in this github page.

I have implemented starter scripts for fine-tuning convnets in Keras. I would recommend GTX 980Ti or a slightly expensive GTX 1080 which cost around $600 bucks. We are talking about a matter of hours with a GPU versus a matter of days with a CPU.

The speed difference is very substantial. I would strongly suggest getting a GPU to do the heavy computation involved in Covnet training. It also comes with a great documentation and tons of online resources. Unless you are doing some cutting-edge research that involves customizing a completely novel neural architecture with different activation mechanism, Keras provides all the building blocks you need to build reasonably sophisticated neural networks. Keras is a simple to use neural network library built on top of Theano or TensorFlow that allows developers to prototype ideas very quickly. This post will give a detailed step-by-step guide on how to go about implementing fine-tuning on popular models VGG, Inception, and ResNet in Keras. Part I states the motivation and rationale behind fine-tuning and gives a brief introduction on the common practices and techniques. This is Part II of a 2 part series that cover fine-tuning deep learning models in Keras. A Comprehensive guide to Fine-tuning Deep Learning Models in Keras (Part II)
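To make the validation score from the VGG16 walkthrough more concrete, here is a minimal pure-Python sketch of the multi-class cross-entropy (log loss) that a call like log_loss(Y_valid, predictions_valid) reports. The function name multiclass_log_loss, the clipping constant eps, and the toy labels and predictions are assumptions for illustration. The post does not show where log_loss is imported from, so this is not necessarily the exact routine the scripts use.

```python
import math


def multiclass_log_loss(y_true, y_pred, eps=1e-15):
    """Mean cross-entropy between one-hot labels and predicted probabilities."""
    total = 0.0
    for true_row, pred_row in zip(y_true, y_pred):
        # Clip probabilities away from 0 and 1 so log() is always defined,
        # then renormalise the row so it still sums to 1.
        clipped = [min(max(p, eps), 1.0 - eps) for p in pred_row]
        norm = sum(clipped)
        total -= sum(t * math.log(p / norm) for t, p in zip(true_row, clipped))
    return total / len(y_true)


# Toy data (hypothetical): two samples, three classes, one-hot labels.
Y_valid = [[1, 0, 0], [0, 1, 0]]
predictions_valid = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1]]

score = multiclass_log_loss(Y_valid, predictions_valid)
print(score)
```

A lower score means the predicted probabilities put more mass on the correct class. A model that assigns a uniform probability to each of K classes scores ln(K), which is a handy baseline when judging a fine-tuned model.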
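The SGD settings in the VGG16 walkthrough (lr=1e-3, decay=1e-6) also imply a slowly shrinking step size. As far as I know, the legacy Keras SGD optimizer applies time-based decay once per batch update, lr_t = lr_0 / (1 + decay * t). The sketch below assumes that rule; the helper name decayed_lr is hypothetical and not part of Keras.

```python
def decayed_lr(initial_lr, decay, iteration):
    """Time-based decay: lr_t = lr_0 / (1 + decay * t), with t counted in batch updates."""
    return initial_lr / (1.0 + decay * iteration)


# Effective learning rate for the post's settings after a given number of updates.
for t in (0, 10_000, 100_000, 1_000_000):
    print(t, decayed_lr(1e-3, 1e-6, t))
```

Under this assumption the learning rate barely moves during a short fine-tuning run and only halves after about a million updates, so the small initial value of 0.001 is what mainly protects the pre-trained weights.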
